In this post, you are going to learn how to build a neural network with just 9 lines of Python code. If you are new to the subject, I highly recommend getting a basic understanding of deep learning first.

Let’s get started!

What is a Neural Network?

A neural network is a series of algorithms designed to recognize patterns. It interprets sensory data through a kind of machine perception, labeling or clustering raw input. The patterns it recognizes are numerical and held in vectors, so all real-world data (images, sound, text, time series) must first be translated into numbers.

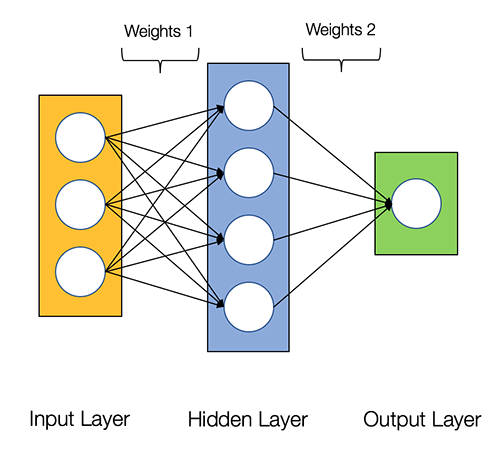

The link between two nodes is called a synaptic link. The input data has to travel along links with the right weights to produce the correct value at the output node, a process called “thinking” (sense).

Learn how it is made mathematically from here.

We’re going to build a neural network that trains on a very small data set.

    from numpy import exp, array, random, dot
    train_i = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
    train_o = array([[0, 1, 0, 1]]).T
    random.seed(1)
    link_w = 2 * random.random((3, 1)) - 1
    for iteration in range(100):
        output = 1 / (1 + exp(-(dot(train_i, link_w))))
        link_w += dot(train_i.T, (train_o - output) * output * (1 - output))
    print(1 / (1 + exp(-(dot(array([1, 1, 0]), link_w)))))

Data for Training

Each training example has three inputs and one output. The four sets of I/O data are below:

- 0 0 1 0

- 1 1 1 1

- 1 0 1 0

- 0 1 1 1

Input for the Test

- 1 1 0 ?

Now the network has to make sense of this new input and find its output.

How?

As you can see, the output in the training data is always the same as the middle input value, whatever the other two inputs are. Now let’s go through the code line by line.
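A quick way to confirm that pattern is to compare the middle input column with the expected outputs. This is only a sanity check on the training data from the post, not part of the nine lines; the names `middle_column` and `matches` are illustrative.

```python
from numpy import array, array_equal

# Training data from the post: four examples, three inputs each.
train_i = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
# Expected outputs as a column vector (one row per example).
train_o = array([[0, 1, 0, 1]]).T

# Column index 1 is the middle input of every example.
middle_column = train_i[:, 1]
matches = array_equal(middle_column, train_o.ravel())
print(matches)  # True: the output always equals the middle input
```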

Line #01

from numpy import exp, array, random, dot

For matrix calculations, we import the numpy package in Python.

You can also see the mathematical computation for a single node (neuron) from here.

But how do we teach our model to give the correct answer?

- Take the training data as input (train_i) and compute its dot product with the random link (synapse) weights (link_w), then pass the result through the sigmoid activation function to get the output (output).

- Calculate the error with a simple mathematical operation: the expected output minus the actual output.

- The output is delivered.
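The steps above can be sketched for a single pass as follows. The names `sigmoid`, `weighted_sum`, and `error` are illustrative and do not appear in the original nine lines; the data and the weight initialization are taken from the post.

```python
from numpy import exp, array, random, dot

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1 / (1 + exp(-x))

train_i = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
train_o = array([[0, 1, 0, 1]]).T

random.seed(1)
link_w = 2 * random.random((3, 1)) - 1  # random starting weights

# Step 1: dot product of inputs and weights, passed through the sigmoid.
weighted_sum = dot(train_i, link_w)
output = sigmoid(weighted_sum)

# Step 2: the error is the expected output minus the actual output.
error = train_o - output

# Step 3: deliver the output (still a poor guess before any training).
print(output.shape, error.shape)  # (4, 1) (4, 1)
```

Before training, the output is just whatever the random weights happen to produce, which is why the loop later in the post repeats these steps many times.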

Training I/O data

Line #02

train_i = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])

Line #03

train_o = array([[0, 1, 0, 1]]).T

Each input node needs a link weight (link_w), which can be either a positive or a negative number. Every link between a node and the next layer starts out with a random weight; together these weights make up link_w (the synapses).

To generate the random weights, we use numpy’s random functions.

Line #04

random.seed(1)

Line #05

link_w = 2 * random.random((3, 1)) - 1

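This creates the initial link_w (synapses) as a 3 x 1 matrix. random.random returns values from 0 up to (but not including) 1, so multiplying by 2 and subtracting 1 gives random numbers between -1 and 1, both negative and positive.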
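As a small illustration of what the seed and the scaling do, the sketch below draws the weights twice with the same seed and checks their shape and range. The names `first` and `second` are made up for this example.

```python
from numpy import random

random.seed(1)
first = 2 * random.random((3, 1)) - 1

random.seed(1)  # same seed again, so the same numbers come out
second = 2 * random.random((3, 1)) - 1

print(first.shape)                          # (3, 1): one weight per input
print((first == second).all())              # True: seeding makes runs repeatable
print(((first >= -1) & (first < 1)).all())  # True: values lie in [-1, 1)
```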

Line #06

for iteration in range(100):

Line #07

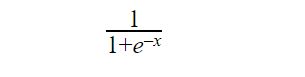

output = 1 / (1 + exp(-(dot(train_i, link_w))))

Line #08

link_w += dot(train_i.T, (train_o - output) * output * (1 - output))

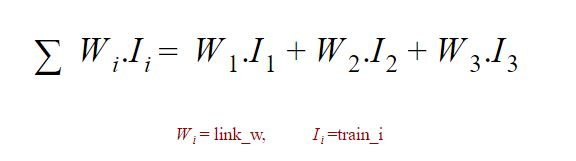

Using the matrix dot product, a basic calculation gives the value of the next-layer node (neuron). It builds up in three steps:

1. dot(train_i, link_w)

2. 1 / (1 + exp(-(dot(train_i, link_w))))

3. output = 1 / (1 + exp(-(dot(train_i, link_w))))

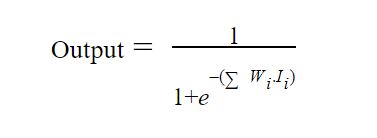

The output is $\frac{1}{1 + e^{-\left(\sum_i W_i \cdot I_i\right)}}$, where $I_i$ are the inputs and $W_i$ are the link weights.
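To see the calculation at work on a single example, here is a minimal sketch. The weights are hypothetical round numbers picked only to make the arithmetic easy to follow; they are not the trained weights.

```python
from numpy import exp, array, dot

# Hypothetical weights, chosen only so the sum is easy to check by hand.
link_w = array([[0.0], [2.0], [-1.0]])
one_input = array([0, 1, 1])

# Weighted sum: 0*0.0 + 1*2.0 + 1*(-1.0) = 1.0
weighted_sum = dot(one_input, link_w)

# Sigmoid of the weighted sum: 1 / (1 + e^-1), about 0.731
output = 1 / (1 + exp(-weighted_sum))

print(weighted_sum)
print(output)
```

A weighted sum of 0 would give exactly 0.5, large positive sums approach 1, and large negative sums approach 0.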

Each iteration recomputes the output of the node (neuron) and calculates the error against the correct answer, so the network gradually makes sense of the data.

The weights are then adjusted depending on the error.

link_w += dot(train_i.T, (train_o - output) * output * (1 - output))
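The update line can be read more easily when it is split into named pieces. In the sketch below, `error`, `slope`, `adjustment`, and `mean_errors` are illustrative names that are not in the original code. The factor `output * (1 - output)` is the derivative of the sigmoid, so confident outputs (close to 0 or 1) are adjusted less than uncertain ones.

```python
from numpy import exp, array, random, dot

train_i = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
train_o = array([[0, 1, 0, 1]]).T

random.seed(1)
link_w = 2 * random.random((3, 1)) - 1

mean_errors = []
for iteration in range(100):
    output = 1 / (1 + exp(-dot(train_i, link_w)))
    error = train_o - output                    # how far off each example is
    slope = output * (1 - output)               # sigmoid derivative at output
    adjustment = dot(train_i.T, error * slope)  # shape (3, 1), one per weight
    link_w += adjustment
    mean_errors.append(abs(error).mean())       # track the average error

print(mean_errors[0] > mean_errors[-1])  # True: the error shrinks with training
```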

In numpy, ‘.T’ transposes a matrix. Transposing train_o turns its single row into a column, so each training input lines up with its output like this:

| INPUT | OUTPUT |
|---|---|
| 0 0 1 | 0 |
| 1 1 1 | 1 |
| 1 0 1 | 0 |
| 0 1 1 | 1 |
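The effect of the transpose is easiest to see in the array shapes. This short check uses the data from the post; `row` is an illustrative name for the outputs before they are transposed.

```python
from numpy import array

train_i = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
row = array([[0, 1, 0, 1]])

print(row.shape)        # (1, 4): one row, four columns
print(row.T.shape)      # (4, 1): four rows, one per training example
print(train_i.T.shape)  # (3, 4): inputs flipped for the weight update
```

In the weight update, the (3, 4) transposed inputs multiplied by a (4, 1) column give a (3, 1) result, which matches the shape of link_w.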

Line #09

print (1 / (1 + exp(-(dot(array([1, 1, 0]), link_w)))))

Output – 0.98807249

Result of input test data – 1 1 0 → 1
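If you want to reuse the network, the same nine lines can be wrapped in two small functions. This is a sketch for Python 3, not the original listing: the function names `train` and `think`, the default of 100 iterations, and the 0.5 threshold used to turn the raw output into a 0 or 1 label are all assumptions made for this example.

```python
from numpy import exp, array, random, dot

def train(inputs, targets, iterations=100, seed=1):
    """Return link weights learned with the update rule from the post."""
    random.seed(seed)
    weights = 2 * random.random((inputs.shape[1], 1)) - 1
    for _ in range(iterations):
        output = 1 / (1 + exp(-dot(inputs, weights)))
        weights += dot(inputs.T, (targets - output) * output * (1 - output))
    return weights

def think(inputs, weights):
    """Run inputs through the trained single-neuron network."""
    return 1 / (1 + exp(-dot(inputs, weights)))

train_i = array([[0, 0, 1], [1, 1, 1], [1, 0, 1], [0, 1, 1]])
train_o = array([[0, 1, 0, 1]]).T

link_w = train(train_i, train_o)
prediction = think(array([1, 1, 0]), link_w)

# Assumed rule: anything above 0.5 counts as a 1.
label = int(prediction[0] > 0.5)
print(prediction, label)
```

The raw prediction is a number between 0 and 1; the closer it is to 1, the more confident the network is that the answer is 1.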

Congratulations on getting this far! Happy learning.
